Jun 13, 2023

In an era where the hunger for data is driving an exponential surge in computational demand, organizations are realizing the need for power-efficient commodity compute to support artificial intelligence (AI) workloads. This is the basis of our work at Neural Magic, as we help customers optimize IT infrastructure to support AI projects and to drive success for business initiatives.

At Neural Magic, we believe in the simplicity and power of software. Optionality is key for customers, along with the ability to get the most out of the hardware they have chosen to invest in. We enable users to realize the full potential of commodity CPUs for AI processes like inference. Our inference engine, DeepSparse, was designed from the ground up to execute state-of-the-art machine learning models efficiently on CPUs. In our recent blog post, we highlighted impressive performance results on AMD EPYC processors, and today we're excited to reveal additional performance results on the latest, no-compromise 4th Gen AMD EPYC processors. Based on the AMD “Zen 4c” architecture, the new processors offer the high core density needed to keep pace with cloud-native workload growth, making them a compelling option for space-constrained data centers.

“Neural Magic’s DeepSparse inference runtime is truly pushing the boundaries of AI inference performance density on CPUs,” said Kumaran Siva, corporate vice president, Strategic Business Development, AMD. “The synergy between the compute-efficiency of their sparse inference and the remarkable price per core improvements delivered by our latest 4th Gen AMD EPYC™ processors, delivers stellar value to customers. This collaboration showcases the promise of delivering impressive performance, flexibility, and affordability all at once, offering a transformative leap in how we perceive and utilize AI in the datacenter — ultimately enabling customers to accomplish more while keeping energy efficiency and cost-effectiveness at the forefront of their operations."

Results Reveal Impressive Performance and Power Efficiency

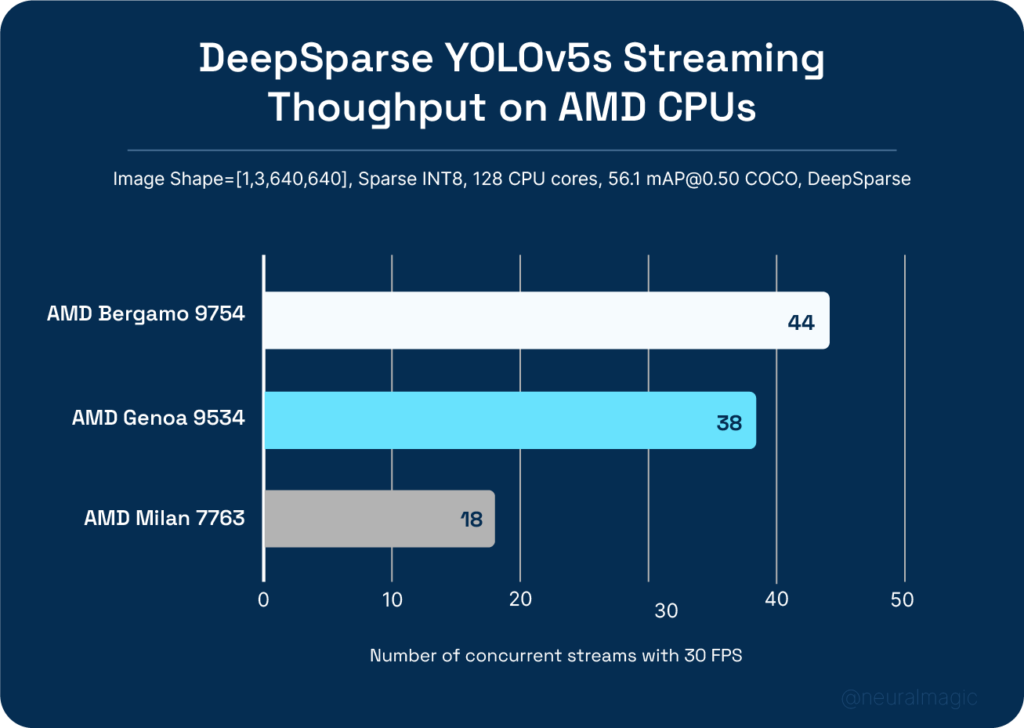

Benchmark tests with DeepSparse on the newest AMD EPYC processors show significant gains. On YOLOv5s workloads, AMD EPYC 9004 Series processors powered by “Zen 4c” cores outperformed previous 4th Gen AMD EPYC processors, sustaining more concurrent streams of image object detection at a maximum latency of 33 ms (30 FPS) per stream. Remarkably, this was achieved on a single-socket machine with a 128-core AMD EPYC 9754 CPU. Consolidating into one socket enables a simpler, more homogeneous CPU platform, which makes it easier to scale workloads that require synchronization.
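To make the "streams under a latency cap" metric concrete, here is a minimal Python sketch of how such a number could be derived. It is not the harness used for the results above. The function `max_streams_within_budget` and the synthetic `toy_latency_model` are hypothetical names introduced only for illustration, and the toy model's numbers are made up rather than measured.

```python
from typing import Callable

# The post's per-stream cap: 33 ms, which corresponds to roughly 30 FPS
# (1000 ms / 30 frames is about 33.3 ms per frame).
LATENCY_BUDGET_MS = 33.0


def max_streams_within_budget(
    measure_max_latency_ms: Callable[[int], float],
    upper_bound: int,
    budget_ms: float = LATENCY_BUDGET_MS,
) -> int:
    """Return the largest stream count whose worst per-stream latency fits the budget.

    `measure_max_latency_ms(n)` is assumed to run n concurrent streams and
    report the worst per-stream latency in milliseconds. The scan stops at
    the first stream count that exceeds the budget.
    """
    best = 0
    for n_streams in range(1, upper_bound + 1):
        if measure_max_latency_ms(n_streams) <= budget_ms:
            best = n_streams
        else:
            break
    return best


def toy_latency_model(n_streams: int) -> float:
    """Synthetic stand-in for a real measurement; NOT benchmark data."""
    return 10.0 + 0.5 * n_streams


if __name__ == "__main__":
    streams = max_streams_within_budget(toy_latency_model, upper_bound=128)
    print(f"Toy model sustains {streams} streams within {LATENCY_BUDGET_MS} ms")
```

In a real run, the toy model would be replaced by a function that drives the inference engine with the given number of concurrent streams and records the slowest one.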

What's truly groundbreaking is the generational improvement that 4th Gen AMD EPYC processors demonstrate. Compared to their 3rd Gen predecessors, 4th Gen AMD EPYC processors deliver a 2.4x increase in performance*. This leap allows data centers using DeepSparse to derive more AI performance within the same rack, power, and monetary budget, improving overall cost and energy efficiency.

Legacy data centers are designed to power and cool about 15 kW per server rack, and they face a recurring challenge: serving more customer workloads within the same physical constraints. AMD EPYC 97x4 Series processors address these constraints by offering a power-efficient way to pack more computational muscle into the same space. Furthermore, because DDR5 memory is a considerable part of a server's expense, the reduced need for memory channels in a single-socket design helps cut per-core costs. This is yet another compelling reason why AMD EPYC 97x4 Series processors, combined with DeepSparse, are an enticing choice to maximize performance-per-dollar in your data center.
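The rack-budget reasoning can be worked through with simple arithmetic. The sketch below takes the 15 kW per-rack figure from this post. The per-server power draw and streams-per-server values are hypothetical placeholders chosen only to show the calculation. They are not measurements of any AMD EPYC system.

```python
RACK_BUDGET_W = 15_000  # about 15 kW per legacy rack, as stated in the post


def servers_per_rack(rack_budget_w: int, server_draw_w: int) -> int:
    """Number of servers that fit within the rack's power and cooling budget."""
    if server_draw_w <= 0:
        raise ValueError("server_draw_w must be positive")
    return rack_budget_w // server_draw_w


def streams_per_rack(rack_budget_w: int, server_draw_w: int, streams_per_server: int) -> int:
    """Total concurrent inference streams a power-limited rack could host."""
    return servers_per_rack(rack_budget_w, server_draw_w) * streams_per_server


if __name__ == "__main__":
    # Hypothetical inputs for illustration only (not measured values).
    example_server_draw_w = 750
    example_streams_per_server = 40

    n_servers = servers_per_rack(RACK_BUDGET_W, example_server_draw_w)
    n_streams = streams_per_rack(
        RACK_BUDGET_W, example_server_draw_w, example_streams_per_server
    )
    print(f"{n_servers} servers per rack, {n_streams} streams per rack")
```

Because the rack budget is fixed, either lowering per-server power draw or raising streams per server increases the total a rack can serve, which is the trade-off the paragraph above describes.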

For more information on the latest 4th Gen AMD EPYC processors, visit AMD.

AMD, the AMD arrow logo, EPYC, AMD 3D V-Cache and combinations thereof are trademarks of Advanced Micro Devices, Inc.

* Neural Magic measured results on AMD reference systems as of Q1FY23. Configurations: 1P EPYC 97x4 128 core vs. 2P EPYC 9534 64 core vs. 2P EPYC 7763 64 core running on Ubuntu 22.04 LTS, Python 3.9.13, deepsparse==1.3.2. YOLOv5s Streaming Throughput (image shape [3, 640, 640], batch 1, multi-stream, per-stream latency <=33ms) using COCO dataset. Testing not independently verified by AMD.