Sep 22, 2023

As artificial intelligence (AI) and machine learning (ML) have become the backbone of technological innovation, companies are racing to provide the best solutions for businesses to improve optimization, efficiency, and scalability. Our founders launched Neural Magic so customers wouldn’t hit the same roadblocks they encountered when trying to get the most out of their hardware for AI initiatives and research. That mission is reinforced by our continued collaboration with AMD, which combines our software-delivered AI solution, DeepSparse, with the recently launched AMD EPYC™ 8004 processors to set a new standard for AI inference performance. This collaboration supports the seamless deployment of popular ML applications such as object detection and sentiment analysis, in industries such as telecommunications and retail and in use cases such as edge computing, so customers can take advantage of the specialized optimizations offered by the 4th Gen AMD EPYC family.

Take AI to the Next Level with Neural Magic

Neural Magic is dedicated to helping companies tap into the full potential of their ML environments. This has become critical as neural networks continue to grow in size, increasing operational complexity and cost. DeepSparse is our sparsity-aware inference runtime, designed to provide power-efficient AI performance on CPUs, from the cloud to the edge. With Neural Magic, customers can run compute- and memory-intensive models on existing CPU infrastructure and achieve performance previously available only with hardware accelerators.

Amplify Capabilities with AMD EPYC 8004

The AMD EPYC 8004 Series CPUs complete the 4th Gen AMD EPYC product line, supporting workloads that require optimal energy efficiency and balanced performance. The series targets lower-power servers and platforms to minimize total cost of ownership (TCO) and optimize performance per watt. The new AMD EPYC 8004 processors feature up to 64 "Zen 4c" cores, 96 PCIe Gen 5.0 lanes, and 6-channel DDR5 memory. These chips are set to revolutionize entry servers with a more TCO-optimized solution. And with a Thermal Design Power (TDP) ranging from 70W to 225W, these CPUs are energy-efficient while delivering robust performance.

“AMD is pleased to see the results Neural Magic has achieved on the AMD EPYC 8004 Series CPU,” said Kumaran Siva, corporate vice president of strategic business development, server business unit at AMD. “DeepSparse combined with EPYC 8004 Series CPUs enable performant software-based inferencing that is optimized for performance per watt.”

Unparalleled Performance and Efficiency

DeepSparse takes advantage of the new AVX-512 and VNNI ISA extensions in the 8004 Series to deliver exceptional inference performance for AI-powered applications and services. Initial results are impressive, showing a 21.6x throughput improvement for BERT-base and a 10.1x improvement for YOLOv5s over the ONNX Runtime baseline at batch size 64.

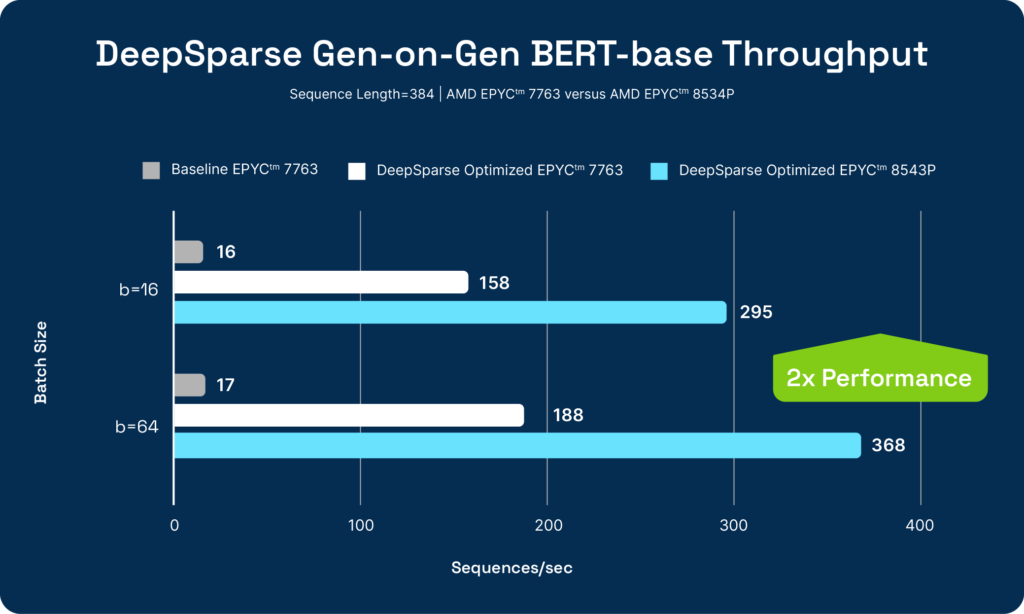

In a head-to-head comparison between the AMD EPYC 7763 and the AMD EPYC 8534P, Neural Magic's DeepSparse roughly doubled performance when running identical models. The gain is larger still once the TDP difference between the two processors is taken into account. The AMD EPYC 8534P has a TDP of 225W, while the 7763 has a TDP of 280W, which works out to a 2.48x gain in performance per watt. This is both a leap in computational performance and a significant stride in energy optimization, pointing to a future where high performance does not require a compromise on energy efficiency. Together, the new AMD EPYC 8004 Series CPUs and DeepSparse provide customers with accelerated performance and a green, cost-effective option to use as part of their overall AI infrastructure strategy.
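The performance-per-watt figure can be sanity-checked with a few lines of arithmetic. The sketch below is illustrative only: it assumes TDP is a fair stand-in for actual power draw (the post quotes TDP, not measured power), and the function names are hypothetical, not part of DeepSparse or any AMD tooling.

```python
# TDP values quoted in the text (watts). Using TDP as a proxy for
# real power draw is an assumption made for this illustration.
TDP_EPYC_7763_W = 280.0
TDP_EPYC_8534P_W = 225.0


def perf_per_watt_gain(speedup: float, old_tdp_w: float, new_tdp_w: float) -> float:
    """Relative performance-per-watt gain of the new CPU over the old one.

    (new_perf / new_tdp) / (old_perf / old_tdp) = speedup * old_tdp / new_tdp
    """
    return speedup * old_tdp_w / new_tdp_w


def implied_speedup(gain: float, old_tdp_w: float, new_tdp_w: float) -> float:
    """Raw speedup implied by a stated performance-per-watt gain."""
    return gain * new_tdp_w / old_tdp_w


if __name__ == "__main__":
    # An exact 2x speedup combined with the TDP ratio.
    print(round(perf_per_watt_gain(2.0, TDP_EPYC_7763_W, TDP_EPYC_8534P_W), 2))
    # The raw speedup implied by the 2.48x figure quoted in the text.
    print(round(implied_speedup(2.48, TDP_EPYC_7763_W, TDP_EPYC_8534P_W), 2))
```

An exact 2x speedup would give about 2.49x performance per watt, so the quoted 2.48x corresponds to a raw speedup of about 1.99x, consistent with the "double" described in the comparison.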

Conclusion

The synergy between Neural Magic's DeepSparse and AMD EPYC 8004 Series processors shows that customers can tackle AI initiatives without breaking the bank. These advances in AI inference performance, characterized by increased optimization, efficiency, and scalability, give customers more infrastructure options without added cost or complexity.