Jan 16, 2025

Welcome to the second edition of our monthly vLLM Newsletter! We are excited to continue sharing updates about the project, new features, and opportunities to engage with the vLLM community. Check out the December Newsletter here.

Keep reading for the latest updates, and please forward this email to others who may benefit!

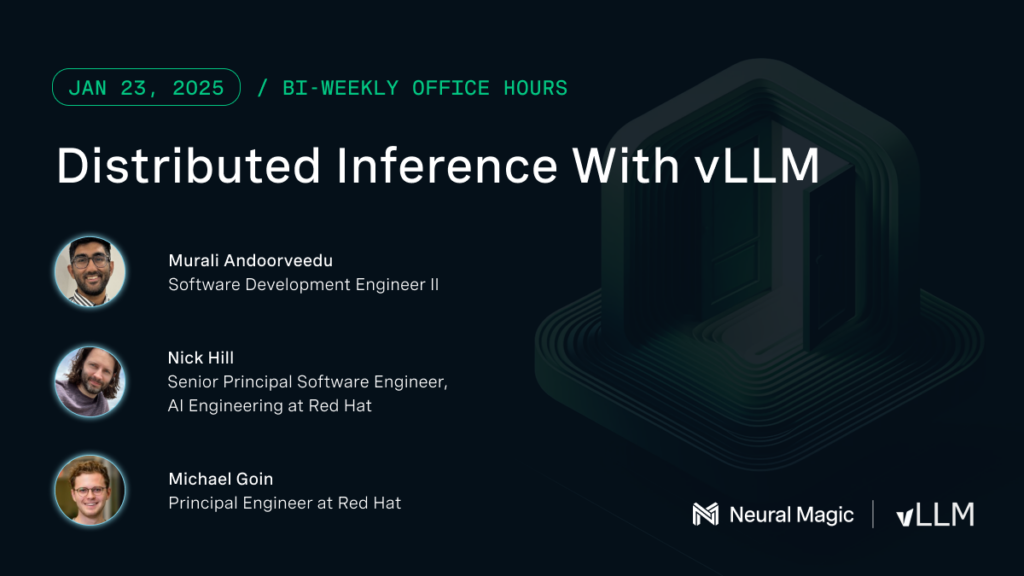

Upcoming Bi-Weekly vLLM Office Hours

Distributed Inference With vLLM | January 23, 2025 - 2:00PM ET / 11:00AM PT

Join our upcoming vLLM Office Hours as we dive into distributed inference with vLLM. We'll explore common pitfalls, practical implementation strategies, and steps to get started, with insights tailored to real-world challenges like those discussed here.

Recent Recordings

vLLM’s 2024 Wrapped and 2025 Vision

vLLM v0.6.6 Update & Open Discussion

Blogs

Structured Decoding in vLLM: A Gentle Introduction

vLLM is the high-throughput and efficient inference engine for running large language models (LLMs). In this post, we explore the annotated history of language models, describe the current state of structured decoding in vLLM and its recent integration with XGrammar, and share our tentative roadmap for future improvements.

Keep Reading
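For readers new to the idea, here is a toy sketch of what structured decoding does at a single generation step: tokens the output format does not permit are masked out before the next token is chosen. This is only an illustration under simplifying assumptions. The vocabulary, the scores, and the `constrained_greedy_step` helper are hypothetical, and the code is not vLLM's or XGrammar's actual implementation or API.

```python
import math


def constrained_greedy_step(logits, allowed_token_ids):
    """Return the id of the highest-scoring token among the allowed ones."""
    if not allowed_token_ids:
        raise ValueError("at least one token must be allowed")
    # Disallowed tokens get a score of -inf so they can never be selected.
    masked = [
        score if token_id in allowed_token_ids else -math.inf
        for token_id, score in enumerate(logits)
    ]
    return max(range(len(masked)), key=masked.__getitem__)


# Hypothetical 5-token vocabulary and model scores, for illustration only.
vocab = ["yes", "no", "maybe", "{", "}"]
logits = [1.2, 0.4, 3.1, 2.0, -0.5]

# Suppose the output format only permits "yes" or "no" at this step.
allowed = {vocab.index("yes"), vocab.index("no")}
choice = constrained_greedy_step(logits, allowed)
print(vocab[choice])  # prints "yes", even though "maybe" has the top raw score
```

In a real engine, the allowed set would be recomputed at every step from a grammar or schema rather than fixed by hand.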

vLLM 2024 Retrospective and 2025 Vision

The vLLM community achieved remarkable growth in 2024, evolving from a specialized inference engine into the de facto serving solution for the open-source AI ecosystem. Celebrate vLLM's 2024 achievements and get a sneak peek at the 2025 roadmap.

Keep Reading

Installing and Developing vLLM with Ease

The field of LLM inference is advancing at an unprecedented pace. With new models and features emerging weekly, the traditional software release pipeline often struggles to keep up. With vLLM, we aim to provide more than just a software package. We are building a dynamic ecosystem that adapts to this rapid evolution, offering developers the tools, documentation, and community support they need to stay ahead.

Keep Reading

2:4 Sparse Llama FP8: SOTA Performance for NVIDIA Hopper GPUs

Advancing AI efficiency is more critical than ever, and sparsity has proven to be a cornerstone in this pursuit. Building on our previous work at Neural Magic with the 2:4 Sparse Llama 3.1 8B foundation model, which increases model efficiency by eliminating unnecessary parameters while preserving accuracy, we are excited to introduce the next step forward: sparse 8-bit floating point (FP8) models and the associated high-performance kernels for vLLM.

Keep Reading
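As a quick refresher, a 2:4 sparsity pattern means that at most two of every four consecutive weights are nonzero. The sketch below shows the pattern on a small list of weights. It assumes simple magnitude-based selection (keep the two largest values in each group), which is an illustrative choice and not necessarily how the Sparse Llama models were produced; the `prune_2_of_4` helper and the sample values are hypothetical.

```python
def prune_2_of_4(weights):
    """Zero the two smallest-magnitude values in each consecutive group of four."""
    if len(weights) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    pruned = []
    for start in range(0, len(weights), 4):
        group = weights[start:start + 4]
        # Indices of the two largest-magnitude entries in this group.
        keep = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        pruned.extend(w if i in keep else 0.0 for i, w in enumerate(group))
    return pruned


# Hypothetical weights for one row of a layer, for illustration only.
row = [0.9, -0.1, 0.05, -0.7, 0.2, 0.3, -0.4, 0.01]
sparse_row = prune_2_of_4(row)
print(sparse_row)  # [0.9, 0.0, 0.0, -0.7, 0.0, 0.3, -0.4, 0.0]
```

Because the zeros follow a fixed, regular pattern, hardware and kernels that support it can skip them, which is where the efficiency gain comes from.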

Events

1️⃣ The Year of Full-Stack OSS AI!

Optimizing LLMs for Cost-Efficient Deployment with vLLM

Michael Goin, Neural Magic [Red Hat]

Deploying LLMs is just the starting point; optimizing them for cost-efficient, high-performance serving is the real challenge. In this talk, we’ll explore cutting-edge compression techniques and advanced inference system optimizations that enable fast performance on your hardware of choice. Discover practical strategies and tools enterprises trust to scale deployments while minimizing costs.

2️⃣ West Coast vLLM Meetup

The first vLLM meetup of 2025 is on Wednesday, January 22nd, in San Francisco. We will discuss vLLM's performant V1 architecture, the Q1 roadmap, and Google Cloud's innovation around vLLM: networking, Cloud Run, Vertex, and TPU!

3️⃣ First-Ever East Coast vLLM Meetup

It’s happening on March 11, 2025, in Boston! More details coming in early February.

In Other News

It’s official! Red Hat has completed its acquisition of Neural Magic! By acquiring Neural Magic, a leading commercial contributor to vLLM, Red Hat aims to continue supporting the vibrant vLLM community and to enhance Red Hat AI’s ability to support gen AI deployments anywhere and everywhere across the hybrid cloud. Read more on the completed acquisition here.

vLLM is nearing 34,000 stars! 🌟 Be sure to add your star and join the community. Thank you for your support.