Explore Our Latest Insights

Bringing the Neural Magic to GPUs

Announcing Community Support for GPU Inference Serving

Over the past five years, Neural Magic has focused on accelerating inference of deep learning models on CPUs. To achieve this, we did two things: Many of the techniques we used to make CPU inference more efficient can also help GPUs process LLMs.…

03.05.2024

Pushing the Boundaries of Mixed-Precision LLM Inference With Marlin

Key Takeaways

In the rapidly evolving landscape of large language model (LLM) inference, the quest for speed and efficiency on modern GPUs has become a critical challenge. Enter Marlin, a groundbreaking Mixed Auto-Regressive Linear kernel that unlocks unprecedented performance for FP16xINT4 matrix multiplications. Developed by Elias Frantar at IST-DASLab and named after one of the…

04.17.2024

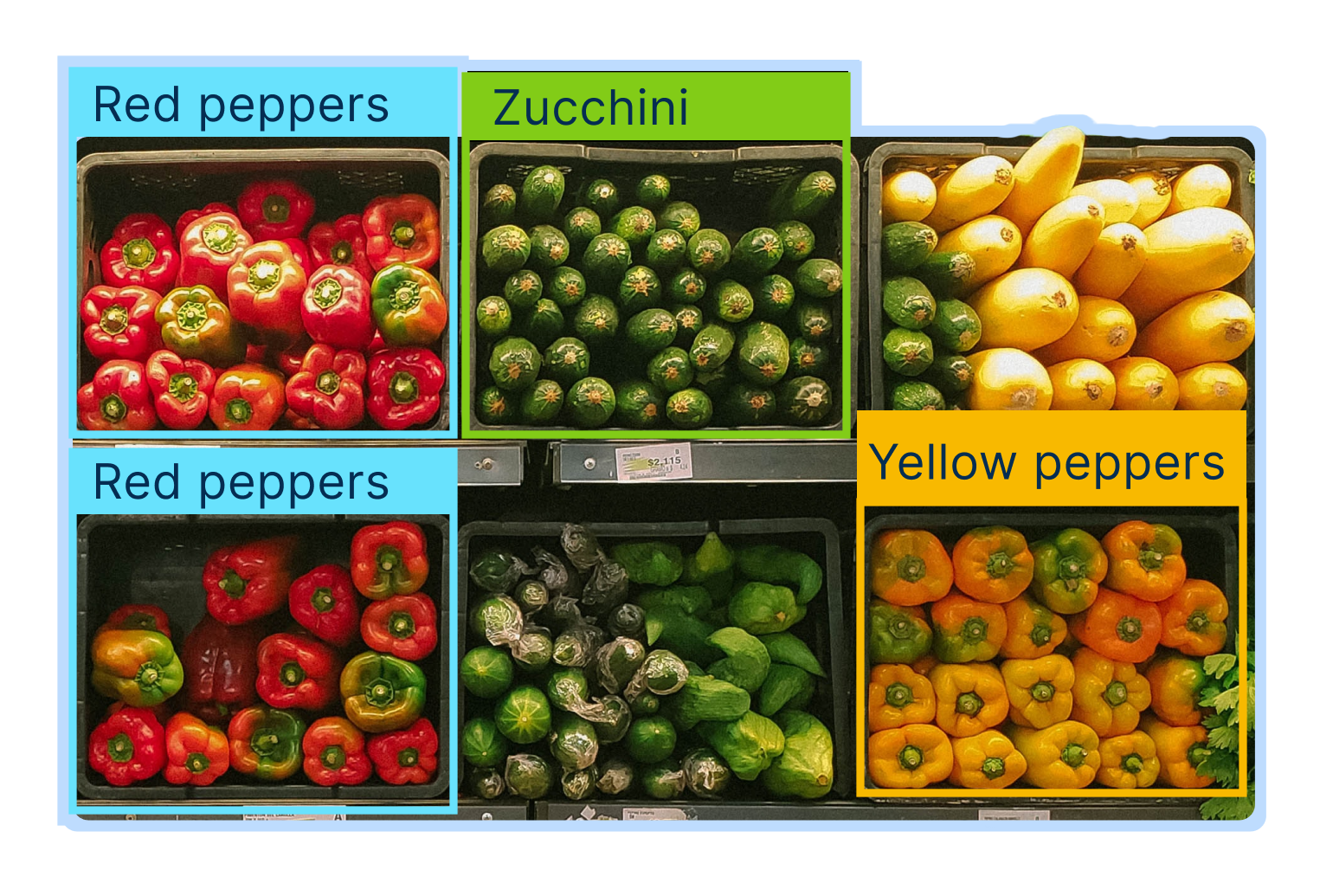

YOLOv8 Detection 10x Faster With DeepSparse—Over 500 FPS on a CPU

Introducing YOLOv8: the latest object detection, segmentation, and classification architecture to hit the computer vision scene! Developed by Ultralytics, the authors behind the wildly popular YOLOv3 and YOLOv5 models, YOLOv8 takes object detection to the next level with its anchor-free design. But it's not just about cutting-edge accuracy. YOLOv8 is designed for real-world deployment, with a…

01.18.2023